WHEN “REAL PARTS” BREAK LEGACY TOOLS

Many metal AM teams recognize that they need simulation software that works not just for research but for complex, high-value production applications.

Several commercial software packages exist for simulating the additive manufacturing (AM) process, but “real parts” expose the painful limits of traditional simulation approaches. The AM industry is moving toward larger, more complex geometries, and these tools simply weren’t designed for that reality. They crash when models get too large, or they run only if you oversimplify the geometry. Even then, acceptable accuracy is usually out of reach, and run times stretch beyond anything usable in a real engineering schedule.

The result is predictable. Teams keep falling back on trial-and-error prints. That’s why demand is now so strong for simulation tools that handle extreme complexity accurately, and fast enough to affect decisions before the machine is booked.

WHAT “TOO LARGE, TOO COMPLEX” REALLY MEANS IN 2026

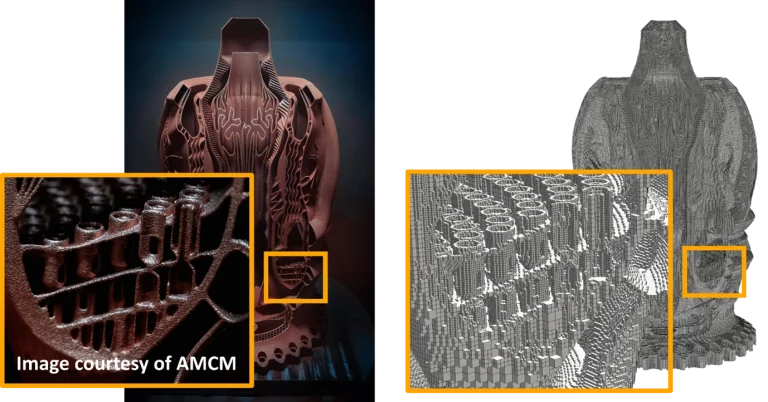

“Too large” isn’t just a bigger bounding box. The real problem is the combination of small features and large volume. Companies running modern AM production systems want to print 0.2 mm-class features within 1000 mm-class build envelopes, often on large multi-laser systems.

This is the regime where simulation matters most. On today’s large-format, multi-laser production systems such as the 4-laser AMCM M 4K (450 × 450 × 1000 mm), a failed print is prohibitively expensive. Avoiding one requires accurate, reliable modelling tools that resolve the fine details of the geometry while also accounting for the entire build volume, so users can see and improve the outcome of a print through virtual iterations rather than experimental trial and error.

WHY FIRST-GENERATION TOOLS HIT A SCALABILITY CEILING

Many legacy tools are adequate for low-fidelity analyses but were architected in ways that don’t scale to modern production components. Finite element analysis (FEA) tools need very small elements to capture small features. That is a problem because filling a large volume with small elements produces an enormous element count: halving the element size of a uniform 3D mesh multiplies the number of elements by eight. Legacy solvers commonly hit a practical ceiling of ~5 million elements (~10 million nodes), which forces engineers to defeature or homogenize the model (represent fine features with larger elements and approximated material properties) just to make it solvable.
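To show why small features in a large volume overwhelm a legacy mesher, here is a back-of-envelope sketch. It assumes a uniform mesh of cube-shaped elements with a single element across the smallest feature, which is optimistic; real meshes are graded, so treat the result as an order-of-magnitude estimate. The envelope (AMCM M 4K), the 0.2 mm feature size and the ~5 million element ceiling come from the text. The function names are illustrative only.

```python
import math


def uniform_element_count(dims_mm, h_mm):
    """Cube elements of edge h_mm needed to fill a box with side lengths dims_mm."""
    # Subtract a tiny tolerance so float noise in d / h_mm can't push a count up by one.
    return math.prod(math.ceil(d / h_mm - 1e-9) for d in dims_mm)


def coarsest_edge_within_budget(dims_mm, max_elements):
    """Approximate finest uniform edge length (mm) that keeps the mesh within max_elements."""
    volume_mm3 = math.prod(dims_mm)
    return (volume_mm3 / max_elements) ** (1.0 / 3.0)


build_mm = (450.0, 450.0, 1000.0)  # AMCM M 4K build envelope
feature_mm = 0.2                   # smallest feature, one element across (optimistic)
budget = 5_000_000                 # practical legacy element ceiling

n_fine = uniform_element_count(build_mm, feature_mm)
h_budget = coarsest_edge_within_budget(build_mm, budget)

print(f"Uniform mesh at {feature_mm} mm: {n_fine:,} elements")
print(f"Edge length that fits {budget:,} elements: {h_budget:.2f} mm "
      f"(~{h_budget / feature_mm:.0f}x the smallest feature)")
```

The uniform mesh comes out at roughly 25 billion elements. Staying under 5 million elements requires an edge length of about 3.4 mm, roughly 17 times the feature that needs to be resolved. That is why defeaturing or homogenization becomes unavoidable.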

This lack of scalability has consequences:

- Most complex parts cannot be meshed or solved at all.

- Parts that can be meshed and solved may take days or even weeks per simulation run, which is unusable in a production schedule.

- Defeatured models cannot produce meaningful predictions about the fine details they removed.

For these reasons, many organizations quietly stop simulating their hardest parts or never adopt simulation at all. The tools don’t fail because simulation lacks value; they fail because they don’t scale to the current era of additive.

THE PANX TECHNOLOGY STACK BUILT FOR EXTREME COMPLEXITY

PanX takes a different approach, designed from the outset for the “too large, too complex” regime. PanX technologies such as Periodic Adaptivity and Multi-Grid Modelling enable meshing and simulation of FEA models 100–1000× (or more) larger than traditional approaches can handle, which puts hundreds of millions (and even billions) of elements within reach of hardware you can buy today. Importantly, that scale isn’t a hype metric. It enables outcomes that matter:

- Industry-leading accuracy in distortion and temperature prediction, at mesh resolutions that fully capture thin features within large builds while also covering the entire build volume and loose powder. These high-accuracy predictions can also be used to optimize print outcomes.

- Parts that were previously impossible to solve can now be simulated in hours, so simulation can guide decisions rather than document them after the fact.

LPBF AT FULL PART SCALE

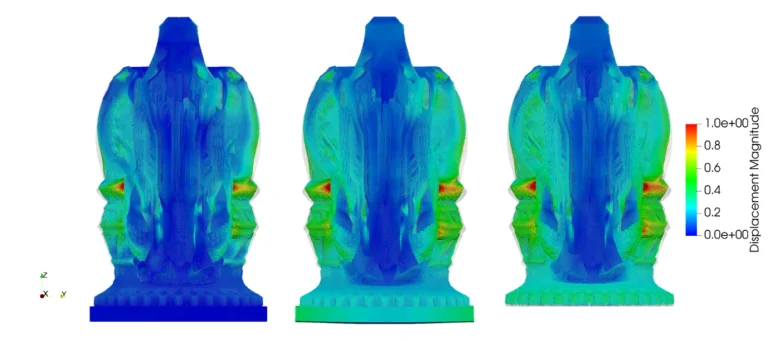

One of the hardest tensions in laser powder bed fusion (LPBF) simulation is simple to state: you must capture small features and still be able to finish a simulation run. PanX resolves this tension with technologies that concentrate resolution where it matters while keeping solve times practical.
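The article doesn’t describe how Periodic Adaptivity or Multi-Grid Modelling work internally, so the sketch below is not PanX’s method. It is a generic two-level local-refinement estimate that shows why concentrating resolution pays off. It assumes that only a small, hypothetical fraction of the build volume (`fine_fraction`) needs 0.2 mm elements, that the rest can use elements 16× coarser, and that transition elements can be ignored. All names and parameter values are illustrative.

```python
def uniform_count(volume_mm3, h_mm):
    """Elements in a uniform cube mesh of edge h_mm over volume_mm3 (volume-based estimate)."""
    return volume_mm3 / h_mm ** 3


def two_level_count(volume_mm3, fine_fraction, h_fine_mm, h_coarse_mm):
    """Estimate when only fine_fraction of the volume needs the fine edge length.

    Ignores the transition elements a real graded mesh needs between the two sizes.
    """
    fine = uniform_count(fine_fraction * volume_mm3, h_fine_mm)
    coarse = uniform_count((1.0 - fine_fraction) * volume_mm3, h_coarse_mm)
    return fine + coarse


volume = 450.0 * 450.0 * 1000.0  # AMCM M 4K envelope, mm^3
h_fine = 0.2                     # resolves 0.2 mm-class features
h_coarse = 3.2                   # 16x coarser, i.e. four 2:1 refinement levels
fine_fraction = 0.001            # hypothetical: 0.1% of the volume near thin features

uniform = uniform_count(volume, h_fine)
adaptive = two_level_count(volume, fine_fraction, h_fine, h_coarse)
print(f"uniform: {uniform:.3g} elements, two-level: {adaptive:.3g} elements, "
      f"reduction ~{uniform / adaptive:.0f}x")
```

With these made-up inputs, the estimate drops from about 25 billion elements to about 31 million, roughly an 800× reduction. The result is very sensitive to `fine_fraction`, which depends entirely on the geometry being printed.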

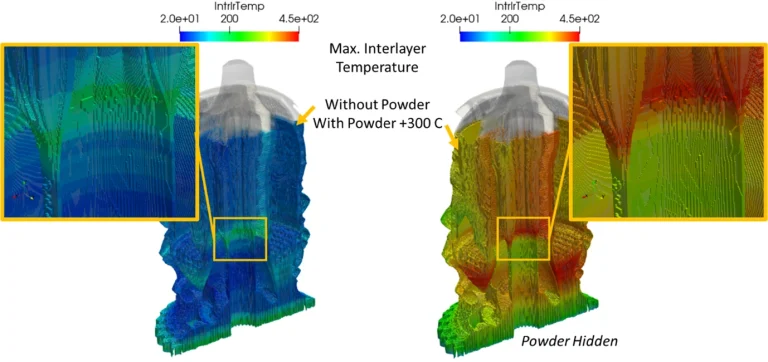

In the aerospike example above, the full part, including powder, was simulated in 3.5 hours. At comparable refinement, this case could not be meshed, let alone solved, in other solvers. The mesh has 26 million elements and 57 million nodes. That is more than five times the ~5 million elements that typical FEA tools must stay under, a limit that forces simplifications that destroy accuracy. The simulation ran on a 60-core Intel Xeon CPU using 120 GB of RAM, a practical engineering-workstation-class desktop. Many PanX simulations can even run on an engineering laptop with only 32–64 GB of RAM.
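The quoted figures can be put in perspective with some simple arithmetic. The sketch below takes the element count, node count, RAM and legacy ceiling from the text and assumes decimal gigabytes. The `elements_for_ram` helper is hypothetical. It extrapolates linearly from this single run, which is an assumption: real memory use depends on the solver, element type and model.

```python
GB = 1e9  # assumption: decimal gigabytes

elements = 26e6         # aerospike mesh, from the text
nodes = 57e6
ram_bytes = 120 * GB
legacy_ceiling = 5e6    # typical FEA element limit quoted in the text

ratio_to_ceiling = elements / legacy_ceiling
bytes_per_element = ram_bytes / elements
bytes_per_node = ram_bytes / nodes


def elements_for_ram(ram_gb, bytes_per_elem=bytes_per_element):
    """Hypothetical linear extrapolation: elements that fit in ram_gb at this run's footprint."""
    return ram_gb * GB / bytes_per_elem


print(f"{ratio_to_ceiling:.1f}x the legacy ceiling, "
      f"{bytes_per_element / 1e3:.1f} kB per element, {bytes_per_node / 1e3:.1f} kB per node")
for laptop_gb in (32, 64):
    print(f"{laptop_gb} GB -> ~{elements_for_ram(laptop_gb) / 1e6:.1f} million elements (linear guess)")
```

At roughly 4.6 kB per element, a 64 GB machine would hold on the order of 14 million elements under this linear assumption. That is still well above the legacy ceiling, although actual capacity will differ.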

PanX is built for the “large machine era”. It supports 1000 mm-class machines and beyond, including platforms such as the AMCM M 8K (800 × 800 × 1200+ mm) and AMCM M 4K (450 × 450 × 1000 mm), compared with older machine generations in the 250–280 mm class.

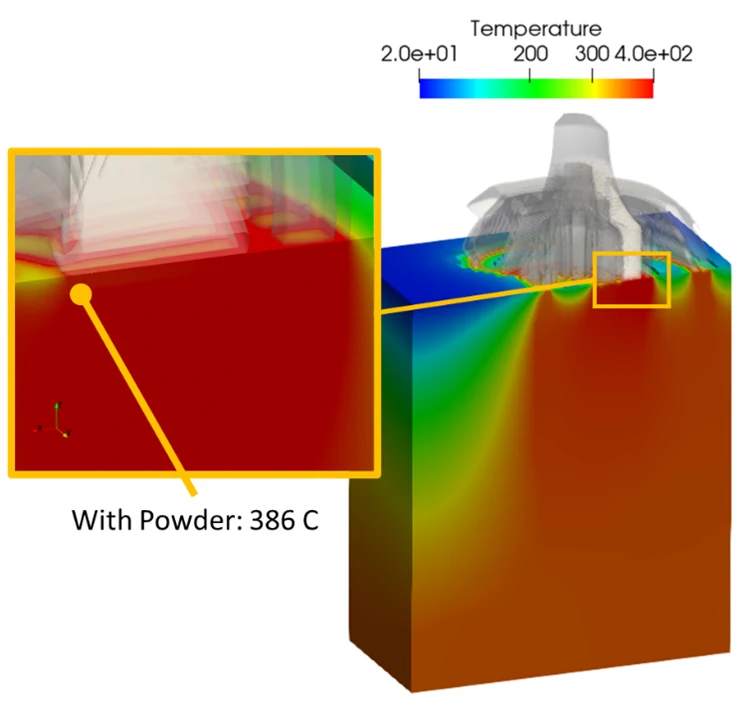

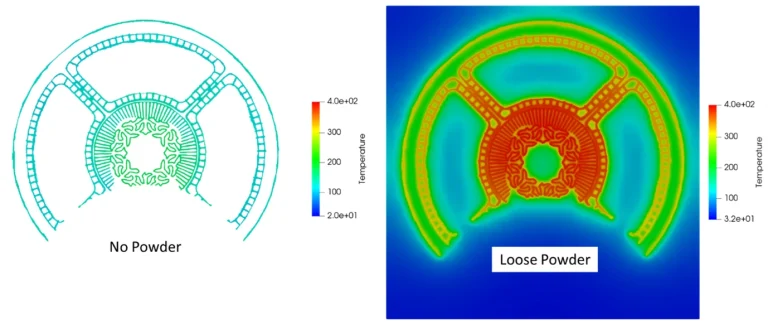

For large builds, simplified heat-loss boundary conditions are a harmful shortcut. PanX includes the loose powder in the simulation and explicitly models the build plate, enabling realistic heat transfer and thermal history calculations. Traditional modelling approaches cannot mesh the loose powder volume at this scale because the computational cost is prohibitive.

A fact that many teams learn the hard way: especially for large builds, powder is hot, and it does matter. Ignoring powder often means you’re simulating a different process than the one you actually run. Powder meaningfully influences heat flow, gradients, and the thermal history of critical features.

Beyond loose powder, the model must also be able to simulate the entire build plate and every part on it. The build plate isn’t just a heat sink; it participates in the mechanical deformation. Plate behaviour affects both distortion and residual stress.

Workflows that don’t model the build plate explicitly give incorrect results whenever the plate deflects during the print or after the bolts are removed, as it does in large-format prints.

Simulation is only valuable if it is (a) accurate enough to be trusted and (b) available early enough to change decisions.

WHAT TO DO IF YOUR HARDEST PARTS ARE OFF THE TABLE FOR SIMULATION

If your hardest parts are “off the table” for simulation because the solver can’t scale, your toolchain is silently deciding your strategy. The most ambitious metal AM work is not getting smaller or simpler. It’s getting larger and more complex. Simulation is becoming more mission-critical than ever for knowing what will happen and making corrections before the first build.

So, here’s a call to action! Come to us with the parts your current tools can’t handle. If your solver can’t mesh it, can’t run it, or can’t produce answers on a usable schedule, you will be impressed by PanX.

In the next posts in this series, we’ll dig deeper into speed as a design lever, qualification-grade simulation, and how optimization changes the role simulation plays in production metal AM.